Data-driven marketers are focused on taking an analytical approach to campaign strategy and measuring marketing performance. That’s why so many marketers are intrigued by the idea of incorporating direct mail testing as a tool to create continuous improvement and a deeper understanding of what motivates their audience to respond.

But how realistic is the idea of incorporating direct mail testing strategies into an ongoing process, when the delivery medium is a printed piece of paper?

In today’s online world, it may seem as if testing and analysis of marketing performance started with websites and email, and then exploded with the proliferation of social networks and mobile.

However, the first truly data-driven marketing strategies were in place decades ago. That’s when most advertising used mass media to reach broad audiences — with results mostly unattributed to any marketing channel.

Yet even more than a century ago, direct mail offered marketers an opportunity to communicate on a one-on-one basis, to gather data and take advantage of a perfect laboratory to experiment with lists, offers, creative tactics, and more.

And that’s what direct mail testing really is – running experiments to refine campaigns, identify new market segments, and customize messages and offers to those segments.

Indeed, testing is not only a best practice for direct mail; failing to test can be a huge mistake that gives your competitors an edge.

A new laboratory for direct mail testing strategies

Today it’s easy to create a split-run A/B test in online media – for emails, landing pages, and digital ads. Plenty of tools are available to run marketing tests with the click of a button.

But in the offline environment of direct mail, testing once seemed to be far out of reach for all but the largest mailers.

Before automated direct mail solutions such as Postalytics, testing required coordinating multiple manual processes, working with printers and mailers to make sure the right mailings were sent to the right people, reviewing proofs to confirm that the variables being tested were correct, and dealing with plenty of paper and phone calls to evaluate response and results.

Yet new automated tools eliminate all these tedious manual processes and complexity. Today you can send direct mail — and more importantly, use it to test marketing ideas — as easily as you can for digital advertising.

Direct mail testing should be an ongoing process

“I’d like to do a test” is something I hear often, but it misses the point. That’s because testing isn’t just a first step to incorporating direct mail into your marketing, but an ongoing process that builds a long-term competitive advantage for those who commit to it.

The most successful direct mail marketers are constantly testing various offers, lists, and tactics.

Every direct mail campaign should include some form of test because you never know when the results will surprise you with insights that can be highly profitable — such as identifying new markets, introducing new products, and segmenting your marketing with a lot more precision.

I know of one mailer who discovered that an alternate design option that emphasized the call to action on a postcard led to a 47 percent higher response rate on their personalized URLs!


What to test? Develop a hypothesis

Direct mail advertising is certainly both an art and a science. Nearly 100 years ago, Claude Hopkins wrote about the science in his classic book Scientific Advertising. That influenced advertising legend David Ogilvy, who said that “nobody should be allowed to have anything to do with advertising until he has read this book seven times.”

Science, of course, is based on theories. So a scientific test requires that you develop a hypothesis – and then design a test to prove or disprove it. You phrase your hypothesis in a specific way that makes it clear how you’ll run your test and draw conclusions.

Examples of how a hypothesis might be phrased include:

- A letter package will outperform a postcard

- Older people respond more frequently to a more specific offer

- A picture of a person using your product delivers more response than a picture of just the product

In general, direct marketers test audience, offers, messages, and creative — although the audience (lists and list segments you use) and the offer impact performance the most, so they are often tested first.

Testing audience: Which lists work best?

The mailing lists you use can make a significant difference in your results — not just the list itself but specific segments of that list. These tests can deliver insights that not only increase response, but reduce your cost per response so your budget works harder.


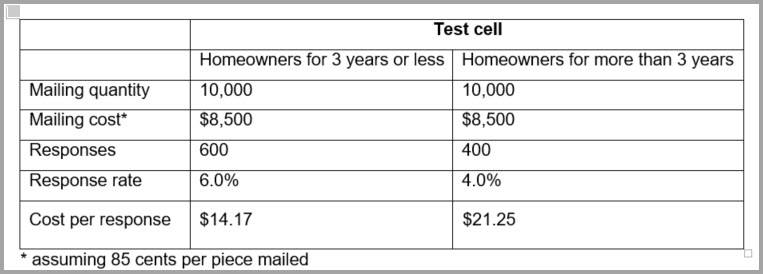

Let’s say that your offer appeals to homeowners, but you think that it might be more appealing to new homeowners than to those who’ve owned their home for many years. You’d need to create a specific hypothesis: for example, that response will be higher from those who’ve owned their home for three years or less than from those who have owned their homes longer.

Then you can set up a split-run direct mail test – using the same offer, creative, and format – with a different audience being your test variable. You’d send one mailing of two “test cells.” Perhaps each cell would be 10,000 pieces. Then after analyzing your response, you might see results like this:

It appears that your hypothesis is correct. You’ve generated 50 percent more responses from the newer homeowner group and your cost per response is about 33 percent less — $14.17 vs. $21.25.
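The arithmetic behind those figures can be sketched in a few lines of Python. The per-cell mailing cost ($4,250) and the response counts (200 and 300) are assumptions chosen only so the output lines up with the $21.25 and $14.17 quoted above; the example itself does not state them, and `cost_per_response` is an illustrative helper.

```python
def cost_per_response(total_cost, responses):
    """Total cost of a test cell divided by the responses it generated."""
    if responses <= 0:
        raise ValueError("a cell needs at least one response")
    return total_cost / responses

# Hypothetical inputs (assumed for illustration, not taken from the article)
CELL_COST = 4250.00   # cost to mail one 10,000-piece cell
long_term = 200       # responses from long-term homeowners
new_owner = 300       # responses from owners of three years or less

cpr_long = cost_per_response(CELL_COST, long_term)
cpr_new = cost_per_response(CELL_COST, new_owner)

response_lift = new_owner / long_term - 1   # extra responses, as a fraction
cost_saving = 1 - cpr_new / cpr_long        # reduction in cost per response

print(f"Long-term owners: ${cpr_long:.2f} per response")
print(f"Newer owners:     ${cpr_new:.2f} per response")
print(f"Response lift: {response_lift:.0%}, cost saving: {cost_saving:.0%}")
```

Note that a 50 percent lift in responses at the same mailing cost always works out to a one-third drop in cost per response, whatever the actual cell cost is.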

A $21.25 cost per response may still be acceptable and profitable, even though you’re glad to generate responses for $14.17 from the other group. But wouldn’t you allocate your budget a bit differently knowing this information?

Perhaps you’d mail more often to the better performing audience. Or you might adapt your offer and product to be more appealing to those who have owned their homes longer. (Of course, that would be a hypothesis that you’d need to test!)

Testing offers: Profiting from better leads and higher conversions

Whether your audience is a general one, or represents a highly specific niche, your offer accounts for a significant portion of your response, because that’s what drives a prospect to respond.

The better the offer, the higher the response – but be careful when creating offers. If you give too much away or the offer is unrelated to your product or service, you might generate way more responses, but ultimately end up with far fewer sales.

Let’s see how this works by looking at a business-to-business example. Assume you are selling a cloud-based solution for workflow collaboration that requires one or more sales presentations to multiple decision makers to close a deal.

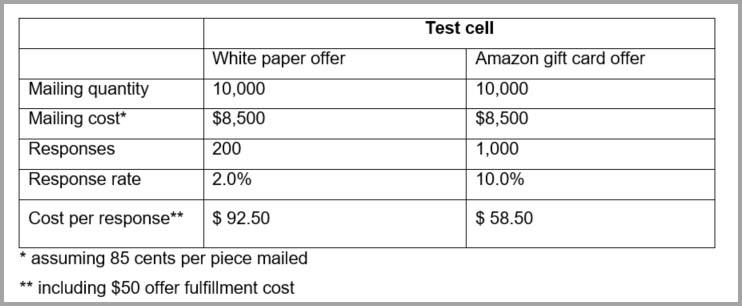

One offer might be for a white paper on workflow collaboration, perhaps one published by a major consulting firm that usually costs $50 but that you’ll provide free in exchange for a response. Another offer might be for a $50 Amazon gift card. Both offers have similar value, but which will perform better?

To test these offers, you’d keep all other aspects of your mailing the same (list, creative, etc.), but vary the offer and create two test cells as in the previous example. It’s highly likely that the gift card offer will generate much higher response. Perhaps the results might look like this:

Initially, it seems as if the gift card offer works best, but how many sales can you expect? Probably fewer, because too many people will want only the gift card and won’t really care about workflow collaboration.

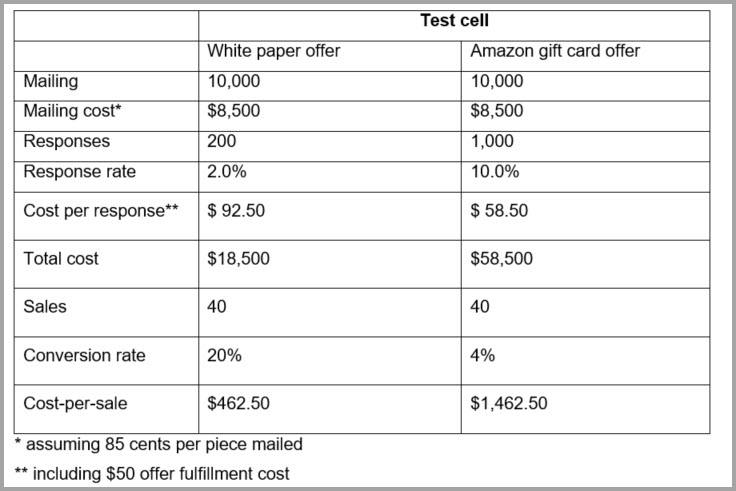

But those who respond to an offer for a white paper on the topic are far more likely to be evaluating solutions like yours. Converting those leads to sales would be a lot easier – and ultimately it’s your conversion rate that measures direct mail success.

Consider how the results above might look if you add a conversion-to-sale analysis:

Not only can you reduce your final cost per sale, you also make life a lot easier for your salespeople, who no longer need to be burdened with hundreds of additional leads that may be nearly useless.
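A conversion-to-sale analysis of this kind might be sketched as follows. Every number here (mailing cost, responses, sales) is hypothetical and chosen only to illustrate the pattern described above, and `OfferCell` is an illustrative name, not part of any tool.

```python
from dataclasses import dataclass

@dataclass
class OfferCell:
    """One test cell in an offer test (all figures hypothetical)."""
    name: str
    mailing_cost: float
    responses: int
    sales: int

    @property
    def cost_per_response(self) -> float:
        return self.mailing_cost / self.responses

    @property
    def conversion_rate(self) -> float:
        return self.sales / self.responses

    @property
    def cost_per_sale(self) -> float:
        return self.mailing_cost / self.sales

# Assumed results: the gift card pulls more responses, the white paper more sales
white_paper = OfferCell("white paper", mailing_cost=5000.0, responses=100, sales=10)
gift_card = OfferCell("gift card", mailing_cost=5000.0, responses=400, sales=4)

for cell in (white_paper, gift_card):
    print(
        f"{cell.name}: ${cell.cost_per_response:.2f} per response, "
        f"{cell.conversion_rate:.0%} conversion, "
        f"${cell.cost_per_sale:.2f} per sale"
    )
```

With these assumed inputs the gift card wins on cost per response but loses on cost per sale, which is the comparison that matters. A fuller version would also add the cost of fulfilling each offer.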

Other direct mail testing ideas: Message, creative and format

According to the 40-40-20 rule, 40 percent of the success of your direct mail program comes from your list, another 40 percent from your offer, with the remaining 20 percent from other attributes including copy, design, message, and format.

These are certainly worth testing as you continue to improve the performance of your direct mail campaign. (See 11 Tips For Writing Persuasive Direct Mail Copy)

And because direct mail is a one-to-one communication channel, you can vary creative based on audience, adjust your audience based on offer, and plenty more. You don’t even need to confine your program to one creative format.

For example, you may find a postcard appeals more to one audience segment, while a letter package works better for another segment — delivering an overall lower cost per response.

Testing significance: Is your direct mail test valid?

As in any scientific experiment, you want to validate your test results to confirm that they support your hypothesis and aren’t the product of some random quirk.

Statistical significance is too complex a topic to fully cover here, but you need to make sure you send enough mail to be confident that repeating your test would deliver similar results. There are, after all, random factors that can creep into any marketing test and create varying results.

How much variance can you expect? Let’s say that one test cell delivers a 5% response. Might that same mailing deliver 2% response the next week and 8% the next? If your test is set up correctly, that’s unlikely. But variance ranging from 4.5% to 5.5%? That’s far more likely. In general, the more pieces you mail per test cell, the more confidence you will have in your results.

To see how this works, consider a non-direct mail hypothesis. You have a coin, but suspect it’s weighted so that when you flip it, the coin lands on heads far more often than it should. Is the coin fair? Well, you’d flip it to see – but how many times do you need to flip the coin?

- If you flipped a fair coin 10 times, there’s about a 20% chance you’d get exactly 6 heads (60% of your 10 flips). There’s about a 12% chance you’d get exactly 7 heads (70% of your 10 flips).

- If you flipped it 100 times, there’s only about a 1% chance you’d get exactly 60 heads (60% of your 100 flips). Getting exactly 70 heads (70% of your 100 flips)? The odds are much less than 1%: about 1 in 43,000!
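These odds come from the binomial distribution and are easy to check yourself. Here is a minimal Python sketch, assuming a fair coin and asking for exactly the stated number of heads (the function name is just illustrative):

```python
from math import comb

def prob_exact_heads(flips, heads, p=0.5):
    """Binomial probability of exactly `heads` heads in `flips` flips."""
    return comb(flips, heads) * p**heads * (1 - p) ** (flips - heads)

for flips, heads in [(10, 6), (10, 7), (100, 60), (100, 70)]:
    prob = prob_exact_heads(flips, heads)
    print(f"{heads} heads in {flips} flips: {prob:.4%} (about 1 in {1 / prob:,.0f})")
```

The same share of heads (60% or 70%) becomes far less likely as the number of flips grows, which is why larger test cells give more trustworthy results.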

In marketing terms, the more you mail (that is, the larger your sample size), the smaller the role randomness plays in your response and the narrower the range you can expect for future mailings. If your test cell is small, that range widens.

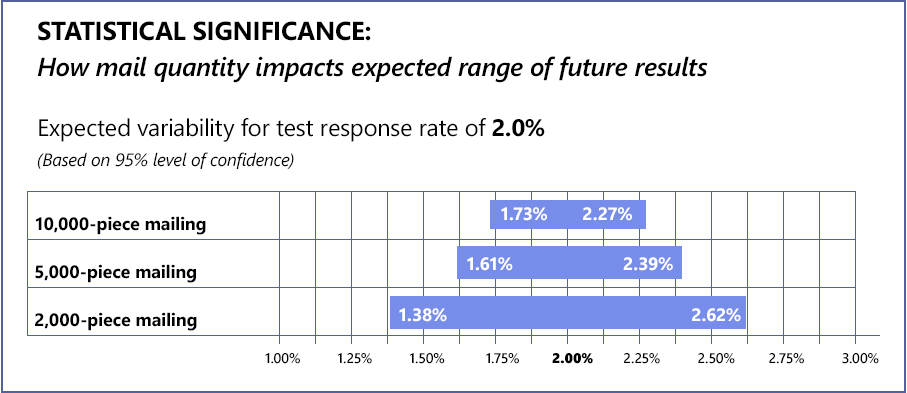

Let’s say you send a mailing and receive a 2% response. This chart shows how the size of your mailing impacts statistical significance:

If you mailed 10,000 pieces, you could expect that a repeat of the test would deliver results between 1.73% and 2.27% (at a 95% level of confidence, usually considered high enough for marketing tests).

But if that test cell contained only 2,000 pieces, the range widens to between 1.38% and 2.62%. A 5,000-piece mailing? That would be somewhere in the middle.
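Ranges like these can be approximated with the standard normal-approximation formula for a proportion, margin = z × sqrt(p(1 − p) / n). The sketch below assumes that formula and z = 1.96 for 95% confidence; the article does not say how its own figures were calculated, so small rounding differences are possible, and `response_range` is an illustrative name.

```python
from math import sqrt

Z_95 = 1.96  # z-score for a 95% confidence level

def response_range(rate, pieces, z=Z_95):
    """Approximate range for a repeat mailing (normal approximation)."""
    margin = z * sqrt(rate * (1 - rate) / pieces)
    return rate - margin, rate + margin

for pieces in (2_000, 5_000, 10_000):
    low, high = response_range(0.02, pieces)
    print(f"{pieces:>6,} pieces: {low:.2%} to {high:.2%}")
```

Because the margin shrinks with the square root of the cell size, you need four times as many pieces to halve the range.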

Also consider that the higher your response rate, the more confidence you’ll have in the results. For example, consider a mailing that receives a 5% response rate. A little variance here and there doesn’t matter. For a response rate of 1%, even a small variance could have a more significant impact on your direct mail performance.

There are calculators available online that you can use to evaluate statistical significance. These include, for example, the MWD statistical calculator and the RR Donnelley statistical significance calculator. But in general, follow these guidelines:

- Make sure each test cell contains enough mailings to give you statistically reliable results

- Don’t roll out promising test results too quickly, but do so gradually as you gain more confidence in the results

- Be more careful with lower response rates. Even if a tiny response rate is profitable, you need more data to be confident in the difference between a 0.1% and a 0.2% response rate than in the difference between 1% and 2%, even though in both cases one test cell doubles the results of the other.
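The last guideline can be illustrated with a two-proportion z-test, one common way to compare two test cells. This is a sketch under stated assumptions: equal cell sizes of 10,000 pieces (borrowed from the earlier example), a pooled standard error, and a two-sided test. It is not necessarily the method the calculators mentioned above use.

```python
from math import erfc, sqrt

def two_proportion_z(rate_a, rate_b, pieces_per_cell):
    """z-score and two-sided p-value for two equal-sized test cells."""
    pooled = (rate_a + rate_b) / 2  # valid because the cells are the same size
    std_err = sqrt(pooled * (1 - pooled) * 2 / pieces_per_cell)
    z = (rate_b - rate_a) / std_err
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail of the normal curve
    return z, p_value

PIECES = 10_000  # assumed cell size

z_low, p_low = two_proportion_z(0.001, 0.002, PIECES)
z_high, p_high = two_proportion_z(0.01, 0.02, PIECES)

print(f"0.1% vs 0.2%: z = {z_low:.2f}, p = {p_low:.3f}")
print(f"1% vs 2%:     z = {z_high:.2f}, p = {p_high:.2g}")
```

At this assumed cell size, the 1% vs. 2% difference clears the 95% confidence bar (z above 1.96) comfortably, while the 0.1% vs. 0.2% difference falls short, even though both cells doubled their control.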

Don’t stop testing: Use automated technology to reveal even more profitable opportunities

Direct mail automation tools like Postalytics eliminate cumbersome and tedious manual processes for creating and deploying campaigns. In the same way, they also make the testing process a lot easier. Here are just a few ways that marketers are using Postalytics tools to deploy direct mail tests in just a few minutes:

- Using built-in list tools to instantly create two audience segments — to test factors such as consumer age, income level or homeownership, business size, location, or industry.

- Making an instant copy of a postcard template and swapping out the offer to test which offer works best for a particular audience.

- Testing a letter format vs a postcard, and deploying the campaigns in minutes.

Above all, keep testing. It’s not merely one step in direct mail planning, but an ongoing process. After all, you never know when a specific test will reveal how your direct mail can perform far better, reduce your costs, and boost your profits.

About the Author

Dennis Kelly

Dennis Kelly is CEO and co-founder of Postalytics, the leading direct mail automation platform for marketers to build, deploy and manage direct mail marketing campaigns. Postalytics is Dennis’ 6th startup. He has been involved in starting and growing early-stage technology ventures for over 30 years and has held senior management roles at a diverse set of large technology firms including Computer Associates, Palm Inc. and Achieve Healthcare Information Systems.